Why are AI agents a security threat?

AI agents pose real security risks: leaked credentials, risky plugins, and a lack of transparency into agent decisions all demand urgent defenses.


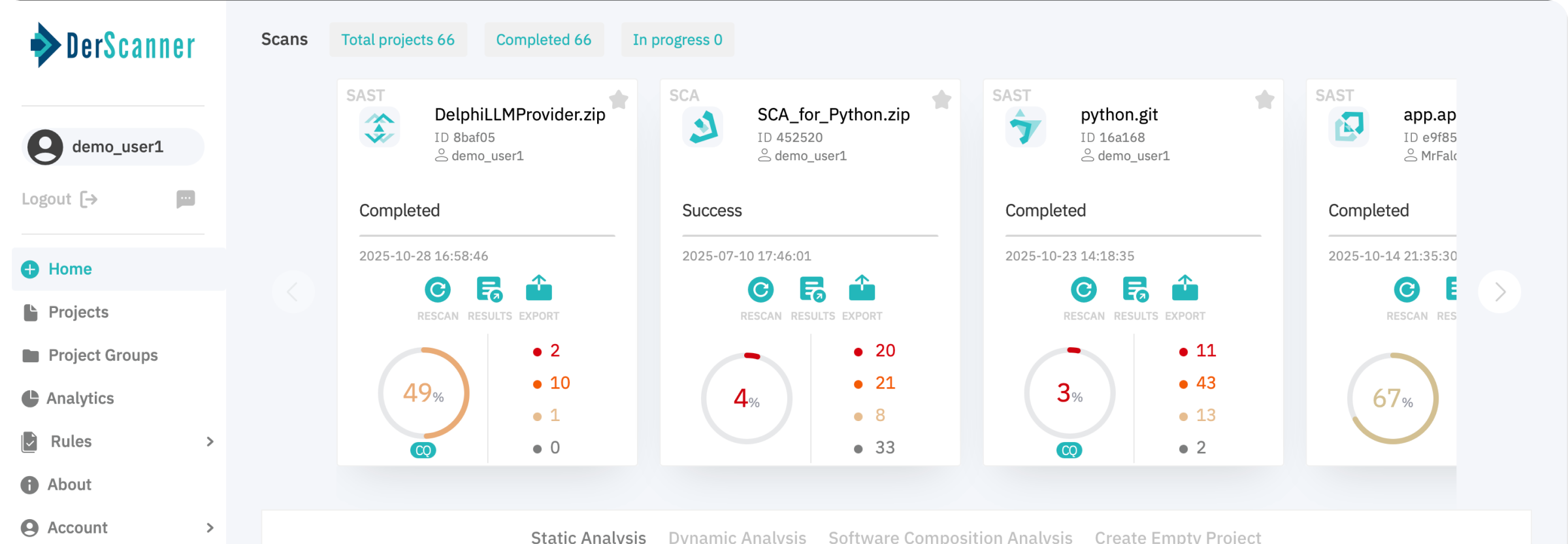


Independent research is already sounding the alarm. A Dimensional Research study (via SailPoint) found that 96% of IT professionals see AI agents as an increasing risk, with 23% reporting incidents where agents were tricked into exposing credentials, and 80% citing unintended actions such as accessing systems or leaking data.

A large-scale public red-teaming effort covering 22 AI agents across 44 scenarios logged 1.8 million attack attempts, with tens of thousands successfully bypassing policies — sometimes in under ten queries.

Over-Permissioned by Design

AI agents are often provisioned with broad API keys and minimal role-based access control. A code-review agent may be able to read production logs or trigger deployments simply because it was easier to configure that way. If the agent is compromised, the attacker inherits that broad access immediately, with no privilege escalation needed.
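One way to narrow that access is an explicit allowlist checked before every agent action. The sketch below is illustrative only: `AgentPolicy`, `authorize`, and the resource names are hypothetical and not taken from any particular agent framework.

```python
from dataclasses import dataclass, field


class PermissionDenied(Exception):
    """Raised when an agent requests an action outside its allowlist."""


@dataclass(frozen=True)
class AgentPolicy:
    """Hypothetical per-agent allowlist of (resource, action) pairs."""
    agent_id: str
    allowed: frozenset = field(default_factory=frozenset)

    def permits(self, resource: str, action: str) -> bool:
        return (resource, action) in self.allowed


def authorize(policy: AgentPolicy, resource: str, action: str) -> None:
    """Deny by default: anything not explicitly listed is rejected."""
    if not policy.permits(resource, action):
        raise PermissionDenied(
            f"{policy.agent_id} may not {action} {resource}"
        )


# Assumed example: a code-review agent that can only read and comment on PRs.
code_review_policy = AgentPolicy(
    agent_id="code-review-agent",
    allowed=frozenset({
        ("repo:pull-requests", "read"),
        ("repo:pull-requests", "comment"),
    }),
)
```

With this policy, `authorize(code_review_policy, "prod:deploy", "execute")` raises `PermissionDenied`, so a compromised review agent cannot deploy even if its credentials would technically allow it.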

Actions Without Transparency

When an AI agent performs a sensitive action, security teams often see what happened but not why. Traditional logs capture the event, but not the trigger — whether it was a prompt injection, malicious plugin, or poisoned context. Without that decision trail, malicious activity can blend into normal operations.
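A decision trail can be approximated by logging the trigger alongside the event. The following is a minimal sketch, assuming a hypothetical `build_audit_record` helper and field names chosen for illustration. Raw prompts may themselves contain sensitive data, so a real deployment would need to decide how they are stored and redacted.

```python
import hashlib
import json
from datetime import datetime, timezone


def build_audit_record(agent_id, action, target, prompt,
                       context_sources, plugin=None):
    """Return a log record that captures the trigger, not just the event."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent_id": agent_id,
        "action": action,
        "target": target,
        # The originating prompt, plus a hash for tamper-evident correlation.
        "prompt": prompt,
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        # Where the agent's context came from (documents, tool output, etc.).
        "context_sources": list(context_sources),
        # Which plugin, if any, performed the action.
        "plugin": plugin,
    }


# Hypothetical values for illustration only.
record = build_audit_record(
    agent_id="code-review-agent",
    action="read",
    target="prod:logs",
    prompt="Summarize the failing build and include recent log output.",
    context_sources=["pull-request-description", "ci-output"],
    plugin="log-reader",
)
print(json.dumps(record, indent=2))
```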

Plugins as Silent Attack Vectors

Every plugin, connector, or integration is effectively third-party code executed inside a business environment. Few organizations apply the same security reviews to these components as they do to traditional dependencies. Even fewer track updates or validate integrity post-deployment. This creates an unmonitored, high-trust channel for potential compromise.
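Post-deployment integrity validation can be as simple as comparing a plugin artifact against a digest pinned at review time. This is a sketch under that assumption; `verify_plugin` and the idea of a pinned digest store are illustrative, not a feature of any specific plugin system.

```python
import hashlib
import hmac


def sha256_of_file(path, chunk_size=65536):
    """Hash a file in chunks so large artifacts are not loaded into memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_plugin(path, pinned_digest):
    """Return True only if the file still matches the digest recorded at review."""
    actual = sha256_of_file(path)
    # compare_digest performs a constant-time comparison.
    return hmac.compare_digest(actual, pinned_digest.lower())
```

Running `verify_plugin` before each load, and refusing to load on a mismatch, turns a silent plugin update into a visible event that can go back through review.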

No Human “Pause” Before Execution

AI agents act instantly. A single crafted prompt can trigger high-impact actions at scale — without the hesitation or judgment a human operator might have. This speed and automation work in favor of attackers once an agent’s behavior is manipulated.
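A human "pause" can be reintroduced for the small set of actions that warrant it. The sketch below assumes a hypothetical list of high-impact action names and an `approver` callback standing in for whatever review channel a team uses (a ticket, a chat approval, and so on).

```python
# Assumed set of actions that should never run without sign-off.
HIGH_IMPACT_ACTIONS = {"deploy", "delete", "rotate_credentials"}


class ApprovalRequired(Exception):
    """Raised when a high-impact action lacks human approval."""


def execute_action(action, run, approver=None):
    """Run low-impact actions directly; gate high-impact ones on approval.

    `run` is a zero-argument callable that performs the action.
    `approver` is a callable taking the action name and returning True/False.
    """
    if action in HIGH_IMPACT_ACTIONS:
        if approver is None or not approver(action):
            raise ApprovalRequired(f"'{action}' requires human approval")
    return run()
```

For example, `execute_action("deploy", do_deploy)` raises `ApprovalRequired` unless an approver is supplied and returns `True`, while routine actions outside the set run without delay.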

What Security Teams Should Do Now

- Treat AI agents as privileged service accounts.

- Enforce least privilege and strict access segmentation.

- Log actions with full contextual data, including the originating prompt.

- Apply software supply chain reviews to every plugin and integration.

- Track metrics: policy violations, credential exposures, and unintended actions.
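The metrics in the last item can start as simple per-agent counters. This is a minimal in-memory sketch; the `AgentMetrics` class and event names are assumptions for illustration, and a production setup would more likely export these to an existing monitoring system.

```python
from collections import Counter

# Event names mirror the three metrics listed above.
TRACKED_EVENTS = ("policy_violation", "credential_exposure", "unintended_action")


class AgentMetrics:
    """Counts security-relevant events per agent."""

    def __init__(self):
        self._counts = Counter()

    def record(self, agent_id, event):
        if event not in TRACKED_EVENTS:
            raise ValueError(f"unknown event: {event}")
        self._counts[(agent_id, event)] += 1

    def count(self, agent_id, event):
        return self._counts[(agent_id, event)]

    def total(self, event):
        """Sum one event type across all agents."""
        return sum(n for (_, e), n in self._counts.items() if e == event)
```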

The adoption of AI agents is moving faster than the security models meant to contain them. The threat isn’t hypothetical: attackers are already exploiting this bridge between LLMs and corporate infrastructure. The only question is whether you’ll lock it down before it’s crossed.
