SAST Coverage 101: What types of vulnerabilities does SAST cover?

Static analysis (SAST) has expanded beyond classic vulnerability detection to encompass application logic, data flows, and critical configuration decisions. Today's SAST encompasses APIs, containers, infrastructure as code, cloud environments, and machine learning components, where design errors can directly impact system security. A proper understanding of SAST's scope is required for risk management and compliance in complex regulatory environments.


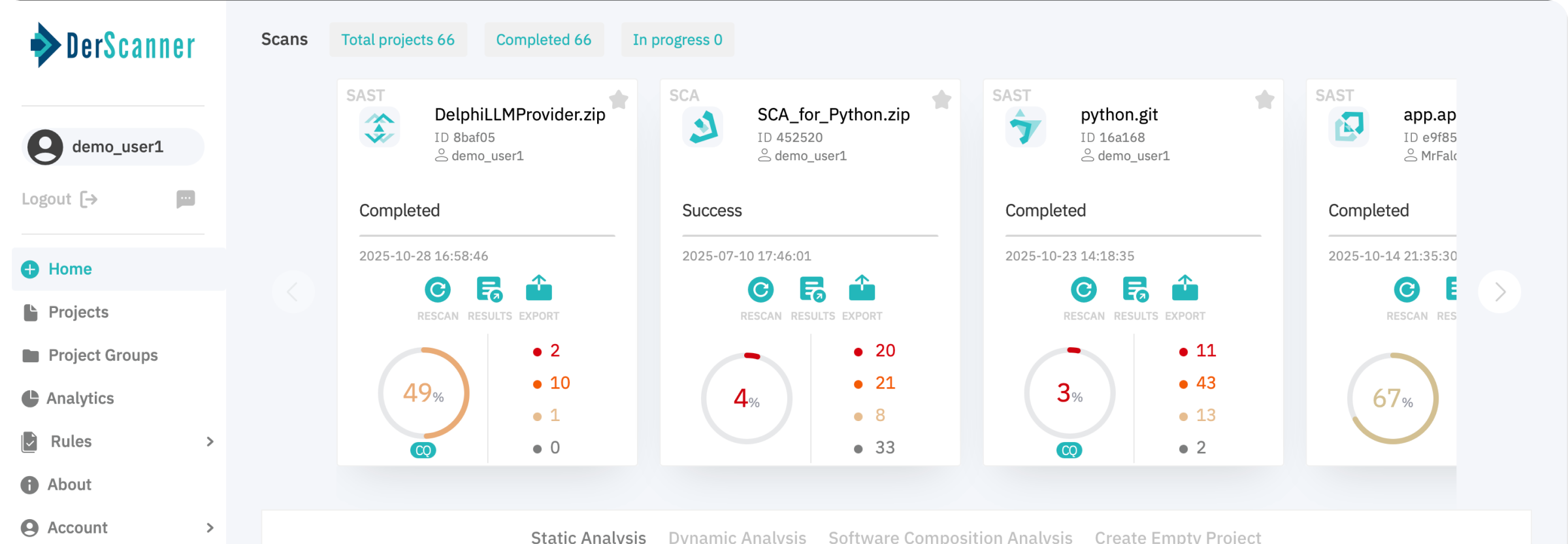


Static Application Security Testing (SAST) is, as of 2026, required by a growing number of regulatory, compliance, and industry standards, from ISO/IEC 27001 and NIST to PCI DSS, DORA, and the Cyber Resilience Act. For many organizations, SAST is no longer merely a desirable security control; it has become a requirement. As a result, expectations around SAST coverage of specific risk classes have also increased.

Not all SAST solutions cover the same classes of vulnerabilities. They focus on different software stacks and support different programming languages and architectures, which leads to significant variability in the types of risks and misconfigurations they can detect.

Knowledge of the full range of SAST checks allows security teams to assess gaps in their current tools, justify the need for additional ones, and better comply with regulatory requirements.

We'll cover the main categories of security issues modern SAST solutions can detect, from classic application vulnerabilities to rarer kinds of risk associated with Infrastructure as Code, container security, APIs, cloud, ML, and more. We'll focus on why these checks are important, what types of risks they identify, and how they align with real-world security and compliance expectations.

Core Types of Vulnerabilities Detected by SAST tools

When reviewing SAST tool coverage, consider the security domain rather than individual vulnerability names. Regulatory requirements, audits, and internal security policies typically specify that security assessment tools must cover particular risk areas, rather than detect specific named vulnerabilities.

| Category | What is analyzed | Why this matters | Compliance |
| --- | --- | --- | --- |
| Application-level vulnerabilities | Source code, user input processing, execution logic | Protection against well-known attack vectors | ISO/IEC 27001, PCI DSS, NIST |
| Data flow and logic issues | Data propagation, trust boundaries, security decisions | Prevents systemic vulnerabilities and compromise of sensitive data | ISO/IEC 27001, NIST, internal security policy |
| Infrastructure as Code (IaC) | Terraform, CloudFormation, ARM templates | Identifies insecure infrastructure settings early | DORA, Cyber Resilience Act, NIST |
| Container Security | Dockerfile, build configuration, base images | Identifies insecure containerization configurations that weaken application isolation and runtime security | DORA, Cyber Resilience Act |
| API Security | API handlers, authorization logic, data deletion | Ensures the security of externally accessible application interfaces | PCI DSS, ISO/IEC 27001 |
| Cloud Solution Security | Cloud SDK usage, IAM logic in code | Prevents privilege abuse, privilege escalation, and misconfiguration of cloud services | ISO/IEC 27001, NIST |
| Machine Learning and Data Processing Components | Model loading, data processing methods | Mitigates emerging risks in machine learning services | Internal risk management, evolving regulations |

Application-Level Vulnerabilities

Injection Vulnerabilities

Injection vulnerabilities occur when untrusted data reaches a sensitive component (a database, a shell, a directory service) without validation or encoding appropriate to that context. They are not tied to a specific technology, but rather arise from how applications construct commands, queries, or expressions using external input.

SAST tools find injection vulnerabilities by analyzing incoming data sources and identifying instances where validation, sanitization, or parameterization are missing or applied incorrectly. For example:

- SQL Injection

A classic example is an authentication form that accepts an email address and password. If user input is directly embedded into an SQL query instead of being passed as parameters, an attacker can manipulate the query logic. In such cases, the injected input may alter the WHERE clause so that the database returns a valid user record even without a correct password, resulting in an authentication bypass.

- Command Injection

Consider an application that deletes temporary files by constructing a shell command such as `rm /tmp/$filename`. If user-controlled input is concatenated into the command and executed via a shell, an attacker may inject additional shell syntax to execute arbitrary commands. Depending on the execution context and permissions, this can lead to destructive actions on the system.

- LDAP Injection

Requests to corporate directories (LDAP) may include usernames or groups. If the filter string is formed by simply concatenating unescaped parameters, an attacker can craft a filter that matches far more of the directory hierarchy than intended.

- XPath Injection

XML is widely used to transfer data between systems. If user input is placed into an XPath query without appropriate filtering, an injected construct can change the semantics of the query and allow data intended only for the administrator to be read.
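The SQL injection scenario above can be sketched in a few lines. This is a minimal illustration using Python's standard `sqlite3` module; the table layout, the function names, and the plaintext password column are assumptions made for the demo only (a real system would store password hashes).

```python
import sqlite3


def make_demo_db() -> sqlite3.Connection:
    """Create an in-memory database with one hypothetical user (demo only)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (email TEXT, password TEXT)")
    conn.execute("INSERT INTO users VALUES (?, ?)", ("alice@example.com", "s3cret"))
    return conn


def login_unsafe(conn: sqlite3.Connection, email: str, password: str) -> bool:
    # VULNERABLE: user input is concatenated into the SQL text,
    # so quotes in the input can rewrite the WHERE clause.
    query = (
        "SELECT email FROM users WHERE email = '" + email
        + "' AND password = '" + password + "'"
    )
    return conn.execute(query).fetchone() is not None


def login_safe(conn: sqlite3.Connection, email: str, password: str) -> bool:
    # Input is passed as bound parameters and is never parsed as SQL.
    query = "SELECT email FROM users WHERE email = ? AND password = ?"
    return conn.execute(query, (email, password)).fetchone() is not None


conn = make_demo_db()
payload = "' OR '1'='1"  # classic authentication-bypass input
print(login_unsafe(conn, "alice@example.com", payload))  # bypass succeeds
print(login_safe(conn, "alice@example.com", payload))    # bypass fails
```

The only difference between the two functions is how the query is assembled, which is exactly the kind of pattern a SAST rule for injection looks for.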

Static analysis provides a true picture of what's happening at the source code level. Because SAST traces data flows and identifies unsafe data access patterns, it helps prevent a wide range of attacks, from simple SQL injections to complex multi-stage manipulations.
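To make the idea of pattern detection at the source level concrete, here is a deliberately tiny checker built on Python's standard `ast` module. It is a toy sketch, not how DerScanner or any production SAST engine works: it only flags `.execute(...)` calls whose first argument is built by concatenation, `%` formatting, an f-string, or `.format()`, and it does no real data flow tracing. The function name and the sample code are hypothetical.

```python
import ast

SAMPLE = '''
def login(cur, email):
    cur.execute("SELECT * FROM users WHERE email = '" + email + "'")
    cur.execute("SELECT * FROM users WHERE email = ?", (email,))
'''


def find_unsafe_execute_calls(source: str) -> list:
    """Return line numbers of execute() calls with a dynamically built query."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        is_execute_call = (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Attribute)
            and node.func.attr == "execute"
            and node.args
        )
        if not is_execute_call:
            continue
        query = node.args[0]
        built_dynamically = (
            isinstance(query, ast.JoinedStr)  # f-string
            or (isinstance(query, ast.BinOp)
                and isinstance(query.op, (ast.Add, ast.Mod)))  # "+" or "%"
            or (isinstance(query, ast.Call)
                and isinstance(query.func, ast.Attribute)
                and query.func.attr == "format")  # "...".format(...)
        )
        if built_dynamically:
            findings.append(node.lineno)
    return sorted(findings)


print(find_unsafe_execute_calls(SAMPLE))  # only the concatenated query is flagged
```

A real analyzer goes much further, following the value of `email` back to its source across functions and modules, but the principle of inspecting code structure without running it is the same.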

The ideal solution is a combination of automated scans and regular code reviews.

Cross-Site Scripting (XSS)

Imagine entering a comment on a forum, and a web application treats your input as actual executable code, sending it to another user's browser. This unexpected effect is called cross-site scripting, or XSS for short.

This occurs when user-entered data is included in a page's HTML code without proper sanitization or character escaping. For example, if someone leaves a comment like `<script>alert("You've been hacked!")</script>`, the page will show that message in a browser dialog box to everyone who views it. Or worse, the script will read the user's cookies and send them to the attacker.

The problem is simple — the browser loses the distinction between plain text and instructions for executing code. Attacks typically occur where our data is mixed in with page markup or dynamic website elements.

When does XSS occur?

Cross-site scripting attacks depend on the context in which the data is displayed. Let's look at the most pressing issues:

- HTML markup

Imagine a forum page where post and comment content is displayed. Without proper filtering, it's easy to insert working JavaScript code here.

- HTML attributes

Imagine a registration form where an attacker adds the `onmouseover="javascript:alert('Hacked');"` attribute to the email field: just hover the mouse over it and a pop-up window appears.

- JavaScript scripts and expressions

If an application collects dynamic configuration settings from the database and embeds them in front-end functionality, improperly sanitized data opens the door to malicious code injection.

- Configuration objects

Front-end configuration loaded from the back-end can also be a target if the necessary security measures aren't taken.

Standard input validation is often insufficient, as it only addresses certain known attack types. This is why XSS remains a persistent threat even after major technology updates and refactorings.
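Because the right defense depends on the output context, a short sketch may help. The example below uses only Python's standard `html` and `json` modules; the three render functions are hypothetical helpers, and in practice a templating engine with auto-escaping would normally do this work.

```python
import html
import json


def render_comment(comment: str) -> str:
    # HTML body context: escape <, > and & so markup is shown as text.
    return "<p>" + html.escape(comment) + "</p>"


def render_email_field(email: str) -> str:
    # Attribute context: quote=True also escapes " and ', so the value
    # cannot break out of the attribute and add an event handler.
    return '<input type="email" value="' + html.escape(email, quote=True) + '">'


def render_config_script(config: dict) -> str:
    # JavaScript context: JSON-encode the data, then neutralize "<" so a
    # string containing "</script>" cannot close the script block early.
    payload = json.dumps(config).replace("<", "\\u003c")
    return "<script>const CONFIG = " + payload + ";</script>"


print(render_comment("<script>alert(1)</script>"))
print(render_email_field('" onmouseover="alert(1)'))
print(render_config_script({"title": "</script><script>alert(1)</script>"}))
```

Each function applies a different encoding to the same kind of hostile input, which is why a single generic "sanitize" step at the input boundary is rarely enough.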

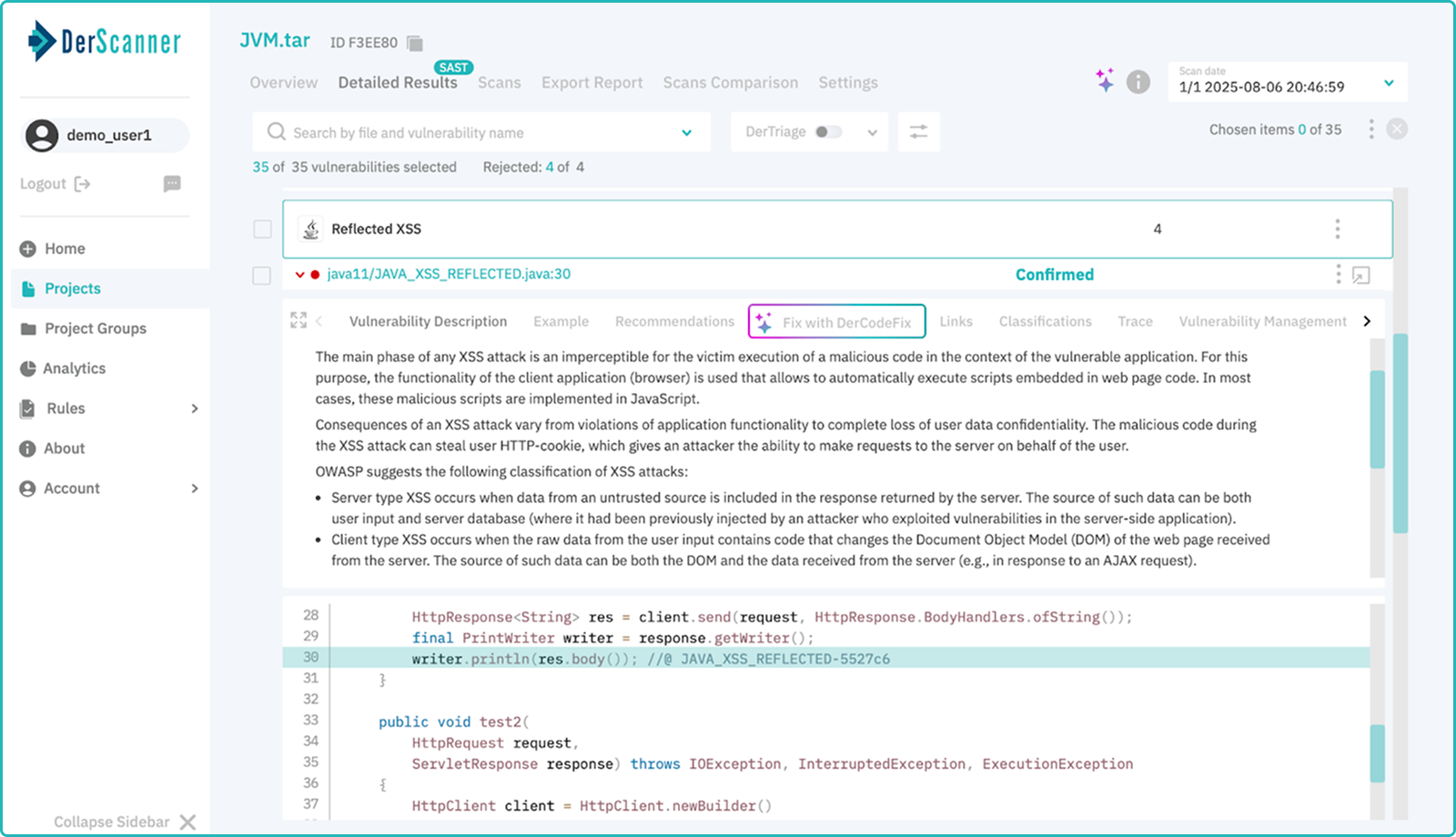

How does static analysis (SAST) help?

Cross-site scripting (XSS) is primarily an output-handling problem rather than an input problem. To detect such situations, SAST closely monitors:

- Data paths from the application's external boundary to the internal rendering or response logic

- Instances where such data is used in executable contexts (HTML, JavaScript)

- Lack of necessary filtering and escaping before display

With this approach, SAST successfully finds potentially vulnerable areas even in large and legacy projects.

Why does XSS remain a problem?

Although the concept itself has long been known, cross-site scripting (XSS) remains popular among attackers. The reason is simple: this attack allows for the almost instant hijacking of user sessions, theft of sensitive data, and impersonation of the victim.

Regulations and security standards (such as GDPR and PCI DSS) require protection of user data, which includes the prevention of such attacks. Any serious development effort must consider the risks of XSS.

SAST vs XSS

The root of most XSS attack vectors lies in the application code. Failure to properly escape strings, ad hoc string concatenation, and improper handling of template data create fertile ground for attack.

Therefore, static analysis is an excellent tool for early detection of problems. While full protection cannot be built solely on it, SAST provides a foundation for further strengthening. The best defense against XSS remains careful, context-aware filtering and escaping of all data passed to the user interface.

Insecure Deserialization

Imagine your application works like a restaurant: a customer orders a dish, the waiter brings it, the customer eats. But suddenly, it turns out that the chef didn't check the dish's ingredients, and instead of chicken, the plate turns out to be poison!

This is roughly what happens with insecure deserialization. The application waits quietly for data, a packet of information arrives (the "order"), and the application transforms it back into a usable form: an object ready for consumption. But no one bothered to check the quality of the ingredients (the validity of the data). The result is that the program starts to behave strangely: internal logic is broken, important checks are skipped, or worse, unauthorized code is executed.

The main mistake here is trusting the incoming serialized data. After all, these could be completely arbitrary objects created specifically to attack your system.

The most common mistakes

Danger can literally lurk anywhere:

- A classic: built-in object serialization

Java has its own built-in serialization system (ObjectInputStream and ObjectOutputStream), and so does Python ("pickle"). Almost every modern language provides a convenient implementation. However, most of them are extremely permissive: any incoming value is converted into an object without any additional checking. This is where the danger zone begins.

For example, attackers can craft a payload that references a special class whose code executes during its initialization.

- General-purpose formats: JSON, XML, YAML

It would seem that everything is standard and convenient: we pass data in a readable format, parse it, and move on. However, the weak link lies in parser libraries. Often, when turning parsed data into program objects, we forget to ensure that the schema matches our expectations. This means an attacker can inject an unexpected field and hijack a session or gain superuser privileges.

Support for creating objects of arbitrary types named in the data is especially insidious: a string in the payload can be transformed into a new object with new capabilities.

- Custom serialization formats

"Let's make it faster!" exclaims the engineer, and creates a home-grown serialization format. Some want to save bytes, others are trying to maintain backward compatibility. The result is predictable: a poorly documented, opaque, and insecure tool that requires extensive maintenance.

Writing your own serialization routines is extremely dangerous. Even the slightest error can create a vulnerability allowing attackers to modify your data.

- Data caching and storage

User sessions, temporary values in server memory, and stored data are all potential targets for attack. Through improperly implemented deserialization, an attacker can change account status, spoof access rights, or steal important information.

But what are the potential risks of insecure deserialization? Here's just a small sample of what could happen:

- The website starts leaking someone else's personal data.

- Authorization becomes optional, and administrative privileges become accessible to a regular user.

- An external party is able to run arbitrary code on your server.

- The database suddenly becomes accessible to any network user.

And the worst part is that this can be done completely unnoticed, because the vulnerability is triggered at the data loading stage, bypassing the usual security layers.
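One way to limit what built-in deserialization can instantiate is an allowlist of permitted types. The sketch below follows the "restricting globals" pattern described in the Python `pickle` documentation; the allowlist contents and the `Malicious` class are illustrative assumptions, and the payload here uses a harmless `eval("1 + 1")` where a real attacker would call something like `os.system`. For untrusted input, a data-only format such as JSON with schema validation is usually the safer choice.

```python
import builtins
import io
import pickle

# Hypothetical allowlist: the only globals this application expects to load.
SAFE_BUILTINS = {"range", "complex", "set", "frozenset"}


class RestrictedUnpickler(pickle.Unpickler):
    def find_class(self, module, name):
        # Called whenever the pickle stream asks for a class or function.
        if module == "builtins" and name in SAFE_BUILTINS:
            return getattr(builtins, name)
        raise pickle.UnpicklingError(f"global '{module}.{name}' is forbidden")


def restricted_loads(data: bytes):
    """Deserialize data while refusing any global outside the allowlist."""
    return RestrictedUnpickler(io.BytesIO(data)).load()


class Malicious:
    # __reduce__ tells pickle what to call on load; plain pickle.loads()
    # would execute this call without any further checks.
    def __reduce__(self):
        return (eval, ("1 + 1",))


print(restricted_loads(pickle.dumps([1, 2, 3])))  # plain data still loads
try:
    restricted_loads(pickle.dumps(Malicious()))
except pickle.UnpicklingError as exc:
    print("blocked:", exc)
```

The absence of such a restriction on types is one of the things a static analyzer can report.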

Helper Tools

The good news is that the problem can be solved right at the coding stage! Guess which kind of tool will:

- Find dangerous sections of code that use insecure deserialization

- Indicate where unsupported or outdated serialization methods are used

- Report where there is no restriction on the types and structures of processed objects

That's right. It's a Static Application Security Testing (SAST) tool :)

Path Traversal & File Inclusion

It might seem that basic tasks like uploading images or downloading PDFs won't raise suspicion, but this is also where pitfalls happen. Applications often accept user data, which is used to create file paths on the server. Reading and writing files is perfectly normal, but trouble occurs when the developer expects the data to land within specified directories, and… it doesn’t.

Let's assume the application should download a report from the /reports folder, but the user passes a path like ../../../config/secrets.txt. If path processing isn't restricted in any way, the server will return a config file containing application secrets! Imagine what can happen if database access keys are stored there.

Examples of Vulnerabilities

These vulnerabilities most often arise where file interactions are dynamic, for example:

- Endpoints for file uploads and downloads

- Report generation or document export

- Template loading or application localization

- Application configuration or third-party module integration

These areas are characterized by the gradual accumulation of exceptions and conditions, which complicates the development of a robust path restriction mechanism.

When it comes to protecting an application's file space, the first thought is to monitor the system's behavior in production. But in reality, the cause of many vulnerabilities lies deeper in the source code. Therefore, path traversal issues are easily detected by static analysis tools (SAST) — vulnerabilities become obvious when reading the code.

- First, file system access is implemented through clearly defined APIs. The analyzer sees exactly which section of code accesses which file and how exactly the path is constructed.

- Second, the path construction logic is transparent. Seeing a variable that assembles a string immediately makes it clear that you need to be careful with it.

- Finally, path traversal issues manifest themselves where path processing is not correct: there is no normalization, no fixed directory specified, no lists of allowed files.

Unlike runtime testing, static analysis does not rely on hitting the right execution path — it systematically covers all of them.
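The three safeguards mentioned above (normalization, a fixed base directory, and rejecting anything outside it) can be sketched with Python's standard `pathlib`. The `/reports` base directory comes from the example in this section; the function name and error message are assumptions.

```python
from pathlib import Path

REPORTS_DIR = Path("/reports")  # fixed base directory from the example above


def resolve_report_path(user_supplied: str, base_dir: Path = REPORTS_DIR) -> Path:
    """Return a normalized path inside base_dir, or raise ValueError."""
    base = base_dir.resolve()
    # resolve() collapses ".." segments and follows symlinks, so the check
    # below is made on the real target, not on the raw string.
    candidate = (base / user_supplied).resolve()
    try:
        candidate.relative_to(base)
    except ValueError:
        raise ValueError(f"path escapes {base}: {user_supplied!r}") from None
    return candidate


print(resolve_report_path("2026/q1.pdf"))
try:
    resolve_report_path("../../../config/secrets.txt")
except ValueError as exc:
    print("rejected:", exc)
```

An absolute path such as `/etc/passwd` is rejected by the same check, because joining it to the base directory replaces the base entirely. A stricter variant could also compare the file name against an explicit list of allowed files.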

Why is this important?

Interestingly, path traversal issues persist not so much due to technical difficulties as to a misperception of the issue's importance. Developers tend to view file system access as a mere technical feature, failing to recognize that it is a potentially powerful attack vector.

This is why security standards (whether ISO/IEC 27001 or PCI DSS) emphasize a preventive approach to file access management. Crossing this boundary is considered a compliance violation.

Data Flow & Logic-Related Security Issues

Modern applications consist of numerous interconnected components, with data moving between them along complex paths. Every small improvement increases complexity, and security gradually erodes. The catch is that each individual part of the system appears flawless until the data crosses invisible trust boundaries.

Usually, it all starts with a false assumption:

"This data came from our own subsystem, so it's reliable."

Later, another assumption is added:

"The service has already verified the data, so it can be trusted."

Gradually, the chain grows longer, until one day a small loophole leads to a major vulnerability.

This is how false trust is born.

Examples of Data Flow & Logic Vulnerabilities

In practice, such problems manifest themselves not in isolated points, but in persistent patterns. They most often relate to the following aspects:

| Area | Typical Failure Mode |
| --- | --- |
| Input handling | Validation applied only at the perimeter, skipped internally |
| Authorization logic | Checks enforced inconsistently across code paths |
| Sensitive data handling | Secrets and tokens reused outside their intended scope |
| Cryptography usage | Correct APIs used with insecure parameters or assumptions |
| Error handling | Internal state exposed through verbose error messages |

Simply put, logic and data flow issues are embedded deep within the application architecture and are hard to spot by examining individual pieces of code in isolation. SAST, on the other hand, can:

- Trace the data path between different modules and methods

- Detect inconsistencies in how security measures are applied across the application

- Flag patterns that appear normal locally but pose a threat globally

Unlike runtime tests, SAST examines the potential capabilities of the application, not the current state.
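As one illustration of "correct APIs used with insecure parameters", consider password hashing with Python's standard `hashlib.pbkdf2_hmac`. Both functions below call the same API; only the parameters differ. The iteration count is an illustrative assumption and should be checked against current guidance; the function names are hypothetical.

```python
import hashlib
import hmac
import os

ITERATIONS = 600_000  # illustrative value, an assumption; follow current guidance


def hash_password_weak(password: str) -> bytes:
    # Correct API, insecure parameters: a constant salt and a single
    # iteration make precomputed and brute-force attacks cheap.
    return hashlib.pbkdf2_hmac("sha256", password.encode(), b"static-salt", 1)


def hash_password(password: str):
    # A random per-user salt and a high iteration count.
    salt = os.urandom(16)
    digest = hashlib.pbkdf2_hmac("sha256", password.encode(), salt, ITERATIONS)
    return salt, digest


def verify_password(password: str, salt: bytes, expected: bytes) -> bool:
    digest = hashlib.pbkdf2_hmac("sha256", password.encode(), salt, ITERATIONS)
    # Constant-time comparison avoids leaking how many bytes matched.
    return hmac.compare_digest(digest, expected)


salt, digest = hash_password("correct horse")
print(verify_password("correct horse", salt, digest))
print(verify_password("wrong guess", salt, digest))
```

Nothing in `hash_password_weak` looks wrong locally, which is why this class of issue tends to be caught by rules that inspect argument values rather than by functional tests.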

Compliance and Risk Management Requirements

In standards such as ISO/IEC 27001, PCI DSS, and NIST-based frameworks, the primary goal is to demonstrate that security measures are applied consistently and thoughtfully throughout the application lifecycle, particularly where trust boundaries are crossed and security-critical decisions are made.

Regulators and auditors expect evidence that the company controls the movement of data within the system, establishes clear trust boundaries, and maintains security measures at every stage. They focus on high-level criteria related to:

- Integrity and confidentiality of data processing

- Clearly defined and maintained trust boundaries

- Continuous adherence to access control and authorization rules

- Prevention of unauthorized access to data through internal flows

Static analysis allows security professionals to thoroughly examine how security assumptions are implemented in code and whether they hold across all branches of program execution. Runtime testing is limited to the paths that are actually exercised, whereas static analysis can reason about all of them, giving a much fuller picture of how the system can behave.

The results of logic and data flow analysis demonstrate not only the security of individual components but also the strength of the overall security strategy of the application. In a regulated environment, this level of awareness is vital to prove that security measures are actually implemented.

Configuration & Policy-Level Security Issues

Developers often believe they know every line of their code, but they underestimate the importance of configuration decisions and security policies built right into the application's core. We're not talking about classic bugs or unpatched fragments, but rather subtle settings that govern the operation of the entire system.

More broadly, configuration determines whether security is enabled, how strong it is, and what conditions weaken it. Simple checkboxes, framework settings, and overridden defaults — all of this has a huge impact on overall security.

Modern applications rely on a multitude of small settings, including:

- SSL/TLS parameters for traffic encryption

- Authentication and authorization switches

- Hard-coded values that change security policy

- Convenient shortcuts for quick framework integration

Each of these small settings, taken individually, raises no questions, but together they create a fragile ecosystem that can be easily destroyed by a single successful attack.

Common Configuration Issues

The settings may seem to work flawlessly, but here are a few examples where errors can appear.

| Type | Examples |
| --- | --- |
| Insecure TLS configurations | Outdated protocol versions, weak key exchange, missing certificates |
| Weakened or disabled checks | Mandatory certificate verification disabled, intentionally reduced strictness |
| Overridden flags | Temporarily disabled checks forgotten by developers after rapid prototyping |
| Insufficient authentication policies | Overly lenient rules or assumptions about token strength |

Such errors are easily explained by haste, backward compatibility, or a desire to simplify integration. But this results in security holes.

Configuration issues live right in the code, and SAST helps in finding them:

- Detects insecure SSL/TLS configurations

- Shows disabled or weakened security measures

- Finds gross policy overrides

- Detects hardcoded permissions
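A small sketch of what such a finding looks like in code, using Python's standard `ssl` module. The two function names are hypothetical; the second one shows the anti-pattern that typically survives from a debugging session.

```python
import ssl


def make_client_context() -> ssl.SSLContext:
    # create_default_context() enables certificate and hostname
    # verification; the minimum protocol version is set explicitly
    # instead of being left to defaults.
    ctx = ssl.create_default_context()
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx


def make_insecure_context() -> ssl.SSLContext:
    # ANTI-PATTERN: verification switched off "temporarily".
    ctx = ssl.create_default_context()
    ctx.check_hostname = False       # must be disabled before CERT_NONE is allowed
    ctx.verify_mode = ssl.CERT_NONE  # any certificate is now accepted
    return ctx


secure = make_client_context()
print(secure.verify_mode == ssl.CERT_REQUIRED, secure.check_hostname)
print(make_insecure_context().verify_mode == ssl.CERT_NONE)
```

The two assignments in `make_insecure_context` are ordinary, syntactically valid code, so functional tests pass; a static rule that looks for `CERT_NONE` or `check_hostname = False` is what surfaces them.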

Regulatory Approval and Compliance Expectations

Regulators don't want to hear about ad hoc security measures that are only theoretically effective. Their primary concern is that controls are systematic, transparently managed, and enforceable over the long term.

This is emphasized by modern regulations and standards, such as ISO/IEC 27001, NIST SP 800-53, PCI DSS, DORA, and the Cyber Resilience Act. All of them insist on the active implementation of measures such as encryption, authentication, access control, and security mechanisms.

The most common questions raised during audits:

- Are secure transmission and encryption parameters explicitly set, or are they accepted by default?

- Are authentication and authorization checks enabled throughout?

- Are security measures disabled for testing, ease of use, or compatibility with legacy technologies?

- Is the company able to prove that important security mechanisms haven't been surreptitiously removed or bypassed?

From a regulator's perspective, a single insecure configuration isn't just a technical error, but an indicator of a lack of effective control management. It signals a potential disruption to the change process, a lack of accountability, and a decline in security confidence.

Small configuration changes — for example, disabling certificate validation, relaxing an authorization flag, or selecting an insecure encryption option — can dramatically impact the entire system. These subtle settings often escape the attention of functional tests, but are easily captured during compliance assessments.

Ignoring these settings creates "blind spots" that are difficult to detect later. Companies have good paperwork, but in reality, security is invisibly compromised by lax settings, conditional selection logic, and unclear exceptions. This gap between declarations and actual practice is the main reason for audit failures in regulated industries.

Architecture-Level Vulnerabilities

The modern application landscape differs from the classic monolithic style. Microservices, containers, IaC, and machine learning elements now dominate. With this architecture, a traditional security strategy based on analyzing individual blocks of code is no longer adequate to address the growing risks.

Let's use a simple analogy: applications used to be like strong castles, but now they resemble entire cities consisting of dozens of services and platforms. A single error in any one service can derail the entire system. Traditional static code analysis focused on examining each individual room of the castle, but now the city as a whole must be assessed.

This is where modern SAST goes far beyond the source code and delves into new areas, providing end-to-end analysis of all layers of the architecture.

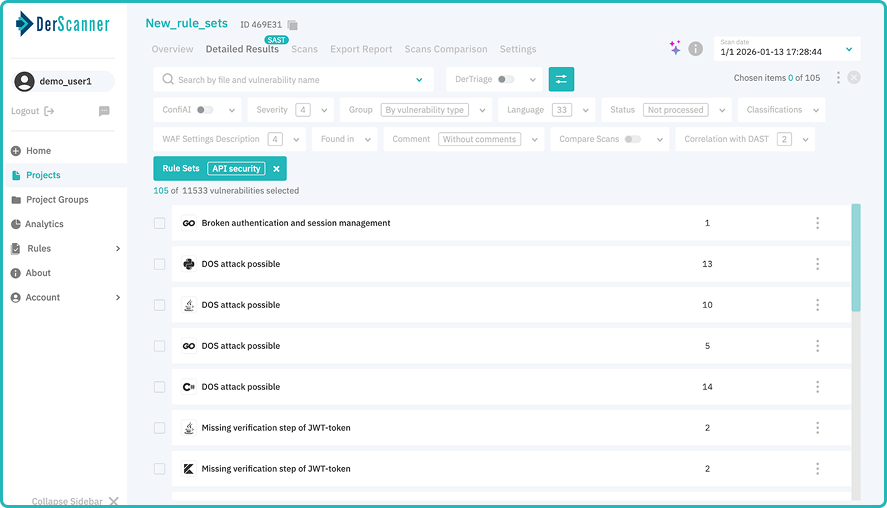

API Security

Perfectly written code is wonderful, but unfortunately, an insecure design or API misconfiguration can ruin even the most polished application. Sometimes an application seems to work perfectly until one small oversight compromises the entire system.

Rather than only observing API behavior at runtime, static API analysis examines the API implementation at the source code level. It aims to identify issues that could negatively impact the API before it goes into production.

This analysis is designed to detect vulnerabilities from the OWASP API Security Top 10 (2023). Specifically, it identifies issues that could lead to the following consequences:

- Complete compromise of the API service or underlying system: someone gains access to restricted functionality or personal data.

- Performance degradation and denial of service (DoS): poor API design causes excessive resource consumption and reduced availability.

- Sensitive data leakage: the API allows personal information to be transmitted to unauthorized parties.

- Complete API failure: the problem is so severe that the API becomes unusable.

Early detection of such vulnerabilities provides stability, predictability, and compliance with security requirements before APIs reach users. It becomes a reliable way to prevent problems, keeping the application secure.
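The first item in the OWASP API Security Top 10 (2023), Broken Object Level Authorization, is a good example of a flaw that is visible in handler code. The sketch below is framework-free; the `Invoice` type, the in-memory store, and the handler names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Invoice:
    invoice_id: int
    owner_id: int
    total: float


# Hypothetical in-memory store standing in for a database.
INVOICES = {
    1: Invoice(invoice_id=1, owner_id=10, total=99.0),
    2: Invoice(invoice_id=2, owner_id=20, total=15.5),
}


class Forbidden(Exception):
    """Raised when the caller may not access the requested object."""


def get_invoice_unsafe(current_user_id: int, invoice_id: int) -> Invoice:
    # VULNERABLE: the caller is authenticated, but nothing checks that
    # the requested invoice belongs to them.
    return INVOICES[invoice_id]


def get_invoice(current_user_id: int, invoice_id: int) -> Invoice:
    invoice = INVOICES.get(invoice_id)
    # The same response for "missing" and "not yours" avoids revealing
    # which identifiers exist.
    if invoice is None or invoice.owner_id != current_user_id:
        raise Forbidden("invoice not found or not accessible")
    return invoice


print(get_invoice_unsafe(current_user_id=10, invoice_id=2))  # leaks user 20's invoice
print(get_invoice(current_user_id=10, invoice_id=1))
```

A static check for this class of issue looks for handlers that accept an object identifier from the request and reach the data store without an ownership or permission comparison on the way.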

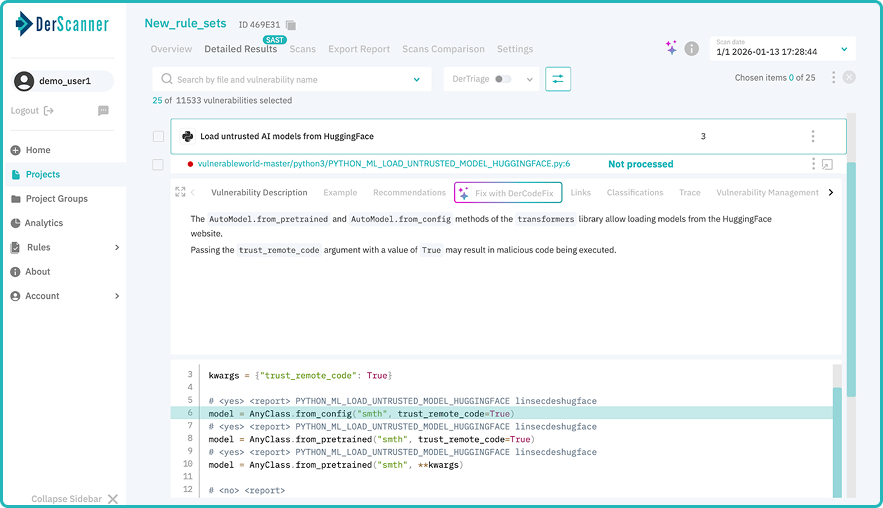

Machine Learning (ML) Security

In addition to traditional code issues, modern software faces a new type of risk — associated with machine learning (ML) components. Unlike classic bugs, ML vulnerabilities are rarely immediately apparent. Instead, they manifest themselves gradually, degrading performance, compromising data integrity, or becoming a conduit for less obvious attacks.

The primary focus when examining ML components is on the elements surrounding the model — supporting code and configurations. The purpose of a static analysis tool like DerScanner is to examine execution paths related to machine learning and identify vulnerabilities such as:

- Lack of or weak input validation. The model may accept incorrect or suspicious data, which will lead to distorted results or abnormal behavior.

- Use of unreliable or insecure models and libraries. Choosing a model whose algorithm is known to be susceptible to attack, or relying on outdated and vulnerable libraries, is particularly critical.

- Execution parameters that reduce security or performance. Parameter adjustments can negatively impact the accuracy and speed of the model, creating an additional risk factor.

A solid approach to examining ML components makes it possible to address two objectives at once:

- Mitigating security vulnerabilities caused by insufficient attention to input data or selecting an unreliable model.

- Optimizing performance, improving the stability and speed of machine learning.

In other words, timely detection of such issues saves resources required for reworking the solution and improves the quality of the final product.
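As a sketch of the input validation point above, the following function checks a feature vector before it is handed to a model. The expected vector length, the allowed value range, and the function name are assumptions chosen for illustration; a real service would derive them from the model's training data and schema.

```python
import math

EXPECTED_FEATURES = 4        # hypothetical model input size
FEATURE_RANGE = (0.0, 1.0)   # hypothetical range the model was trained on


def validate_features(features) -> list:
    """Return features as floats, or raise ValueError if they look suspicious."""
    if not isinstance(features, (list, tuple)) or len(features) != EXPECTED_FEATURES:
        raise ValueError(f"expected {EXPECTED_FEATURES} features")
    cleaned = []
    for value in features:
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, (int, float)):
            raise ValueError("features must be numeric")
        if not math.isfinite(value):
            raise ValueError("features must be finite")
        if not FEATURE_RANGE[0] <= value <= FEATURE_RANGE[1]:
            raise ValueError("feature outside the expected range")
        cleaned.append(float(value))
    return cleaned


print(validate_features([0.1, 0.5, 0.9, 1]))
try:
    validate_features([0.1, float("nan"), 0.9, 1.0])
except ValueError as exc:
    print("rejected:", exc)
```

A static analyzer cannot judge whether the chosen bounds are right, but it can report inference code paths where external input reaches the model with no such check at all.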

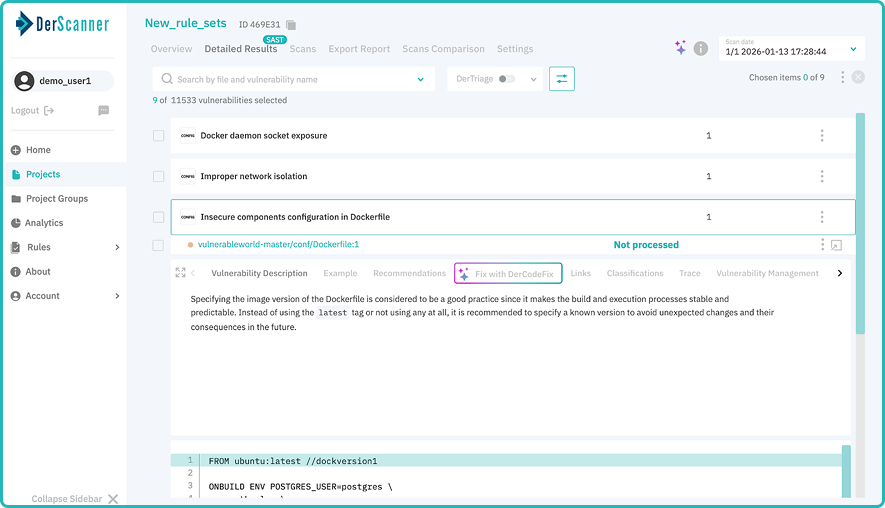

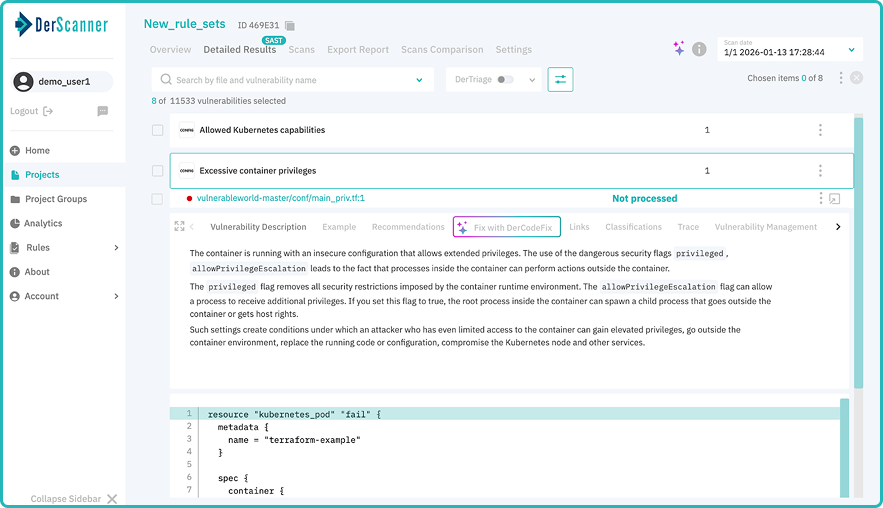

Container Security

Modern container technologies (Docker, Kubernetes, etc.) have revolutionized application development and delivery, shifting the focus to flexibility and efficiency. However, they have also created a new headache: a class of vulnerabilities specific to isolation and image security. Proper image building and container deployment are key to success, and even the slightest oversight in image build settings can significantly increase the risk of security breaches.

Containers are designed to isolate workloads, but incorrect settings violate this isolation, leaving applications vulnerable. The primary risks arise from:

- Incorrect privilege settings

For example, a container run with full root privileges allows a compromised process to make changes to any part of the system.

- Injection of potentially harmful scripts

A poorly vetted script added to an image during build can have a significant negative impact on security.

- Exposed secrets

Including sensitive data (passwords, keys, etc.) in containers in cleartext can lead to their disclosure.

- Choosing an unstable or insecure base image

Using an outdated or poorly secured parent image will create a security hole in the entire infrastructure.

The best way to combat these risks is static analysis of containers. Tools assess the security of container images during the build process, checking specific configurations and identifying the following issues:

- Least privilege violations

Containers possessing unnecessary privileges.

- Potentially harmful build scripts

Suspicious scripts added during the build that can damage the system.

- Exposed secrets

Passwords, tokens, and other sensitive data contained in images.

- Insecure base images

Outdated or unreliable base images.

By addressing these issues before deployment, development teams significantly reduce the attack surface and improve the security of containerized applications. Static analysis is a crucial step in creating reliable and secure products, saving you headaches later.
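Three of the checks listed above (root user, exposed secrets, unpinned base image) can be approximated with a few lines of text analysis over a Dockerfile. This is a toy sketch and not how DerScanner works: the rules, the regular expression, and the function name are assumptions, and it ignores details such as `FROM --platform=...` flags, multi-stage builds, and registry ports.

```python
import re

# Hypothetical heuristic for variable names that look like secrets.
SECRET_PATTERN = re.compile(r"(PASSWORD|SECRET|TOKEN|API_KEY)\s*=", re.IGNORECASE)


def lint_dockerfile(text: str) -> list:
    """Return a list of human-readable findings for a Dockerfile."""
    findings = []
    user = None
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        instruction, _, rest = line.partition(" ")
        instruction, rest = instruction.upper(), rest.strip()
        if instruction == "FROM":
            image = rest.split()[0] if rest else ""
            # No tag, or the moving "latest" tag, means builds are not reproducible.
            if ":" not in image or image.endswith(":latest"):
                findings.append(f"unpinned base image: {image}")
        elif instruction == "USER":
            user = rest  # the last USER instruction wins
        elif instruction in ("ENV", "ARG") and SECRET_PATTERN.search(rest):
            findings.append(f"possible secret in {instruction}: {rest.split('=')[0].strip()}")
    if user is None or user.split(":")[0] in ("root", "0"):
        findings.append("container runs as root")
    return findings


BAD = 'FROM python:latest\nENV DB_PASSWORD=hunter2\nCMD ["python", "app.py"]\n'
GOOD = 'FROM python:3.12-slim\nUSER app\nCMD ["python", "app.py"]\n'
print(lint_dockerfile(BAD))
print(lint_dockerfile(GOOD))
```

Because the Dockerfile is plain text under version control, checks like these can run on every commit, long before an image is built or deployed.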

Infrastructure as Code (IaC) Security

When infrastructure is managed by code (Infrastructure as Code, IaC), configuration errors take on a particular significance. These aren't just small details — they're direct architectural decisions repeated across different environments.

DerScanner is one of the most practical tools for IaC security analysis. It inspects configuration files, such as Terraform files, used to provision Kubernetes clusters and related infrastructure components. The main issues it looks for include:

- Excessive or incorrect permissions assigned to cluster elements

- Missing or insecure security context settings

- Resource definitions that allow unlimited or excessive consumption

This approach ensures infrastructure security at the architectural level.
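The three issue types listed above map onto well-known Kubernetes container fields (`securityContext.privileged`, `runAsNonRoot`, `allowPrivilegeEscalation`, and `resources.limits`). The sketch below checks a pod spec that is assumed to have already been parsed into a Python dictionary; the function name and the sample specs are hypothetical, and pod-level security context inheritance is deliberately ignored to keep the example short.

```python
def check_pod_spec(pod_spec: dict) -> list:
    """Return findings for each container in a parsed Kubernetes pod spec."""
    findings = []
    for container in pod_spec.get("containers", []):
        name = container.get("name", "<unnamed>")
        security = container.get("securityContext") or {}
        if security.get("privileged"):
            findings.append(f"{name}: privileged container")
        if not security.get("runAsNonRoot"):
            findings.append(f"{name}: runAsNonRoot is not enforced")
        # Assumption for this sketch: treat an unset value as "allowed".
        if security.get("allowPrivilegeEscalation", True):
            findings.append(f"{name}: privilege escalation is allowed")
        limits = (container.get("resources") or {}).get("limits") or {}
        for resource in ("cpu", "memory"):
            if resource not in limits:
                findings.append(f"{name}: no {resource} limit")
    return findings


risky = {"containers": [{"name": "web", "securityContext": {"privileged": True}}]}
hardened = {
    "containers": [{
        "name": "web",
        "securityContext": {"runAsNonRoot": True, "allowPrivilegeEscalation": False},
        "resources": {"limits": {"cpu": "500m", "memory": "256Mi"}},
    }]
}
print(check_pod_spec(risky))
print(check_pod_spec(hardened))
```

The same idea applies to Terraform: once the configuration is parsed into a data structure, each policy becomes a simple predicate over resource attributes.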

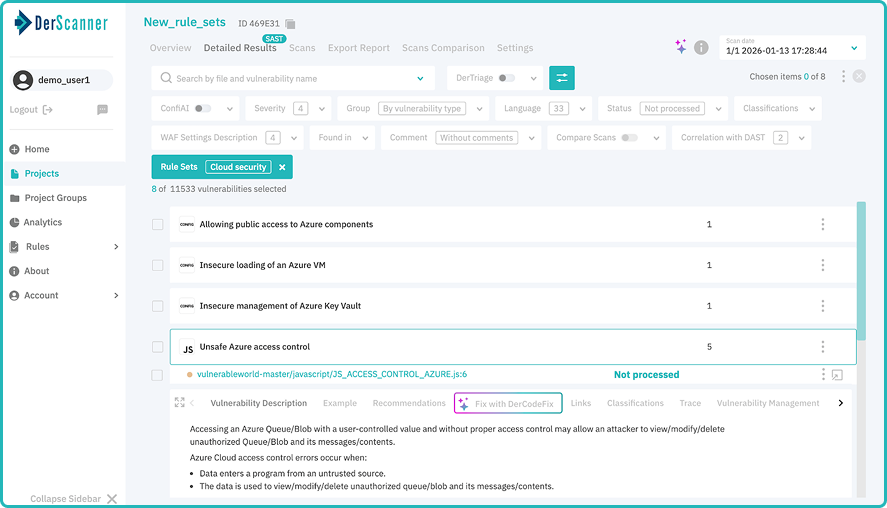

Cloud Security (Microsoft Azure)

Cloud platforms like Microsoft Azure offer extensive capabilities for protecting data and applications, but all benefits are lost if they are configured incorrectly. Cloud security isn't just a matter of technology; it's about informed and correct decision-making.

SAST can identify issues related to cloud configurations built into code and templates. For Azure, DerScanner analyzes the following:

- Incorrect authentication and authorization settings

- Insecure token handling, which can lead to data theft

- Disabled native Azure security mechanisms, which reduce the overall security level

- Lack of encryption for critical resources, such as databases and storage accounts

- Weak configurations of Azure services, which increase system vulnerability

All of this is critical in a regulated environment, where misconfiguration can be considered a direct violation of security standards and legal requirements.

What distinguishes architecture-level static analysis is not the number of findings, but the breadth of visibility it provides. By covering APIs, machine learning systems, containers, infrastructure, and cloud platforms, DerScanner delivers a unified view of security risks across the full technology stack.

This approach allows teams to reason about security as a system, rather than as a collection of isolated vulnerabilities — enabling faster development without sacrificing safety or compliance.

For organizations building complex, distributed systems, architecture-level SAST ensures that security is not bolted on after deployment, but embedded at every layer from the start.

So what?

We're used to measuring the success of static analysis (SAST) by the number of vulnerabilities found or the presence of required functionality. But as application complexity grows and the number of layers (infrastructure, logic, configuration) increases, this approach rapidly loses its value.

The true assessment of SAST's usefulness is now based on the risk levels covered by the analysis and where its capabilities end. Most threats don't exist in isolation. An error in application logic amplifies a configuration vulnerability, and an architectural flaw exacerbates both problems.

When a vulnerability appears in one area, it affects others, creating a kind of "blind spot". These blind spots are usually discovered too late — during audits, incident investigations, or regulatory inspections, when fixing the error is expensive and difficult.

Effective SAST analysis is a multi-layered risk assessment model. Good tools should support various depths of analysis:

- Application logic analysis

- Data flow analysis

- Configuration assessment

- Architectural analysis

A lack of coverage at any of these levels should be a deliberate risk management decision, not an accident. Advanced approaches to SAST cover all stages of development, from code and configuration analysis to monitoring of infrastructure and platform components. This strategy reduces the number of vulnerabilities and prevents the emergence of systemic risks.
