“We Scan Our Code” vs. Having an Actual AppSec Program

Most teams stall at pages of findings, with no process for deciding what to fix first. An actual AppSec program is the system that turns alerts into shipped fixes. Here are five concrete steps to build that system, without the corporate bloat or hiring ten security engineers.

"Are we doing application security?" "Yes, we run scans."

Running a scanner and having an application security program are not the same thing. A scanner produces findings. A program produces outcomes — prioritized risk, measurable remediation, compliance evidence, and a feedback loop that makes each next release safer.

The Contrast Security State of Application Security 2025 found that the average application generates around 17 new vulnerabilities per month while AppSec teams manage to fix about six. Two-thirds of organizations report vulnerability backlogs exceeding 100,000 items, with critical flaws sitting unpatched for an average of 252 days.

AppSec Program 101

Findings are triaged and prioritized

What separates a scan from a program is triage: whether someone has already separated the 10 findings that matter from the 490 that do not. Industry research cited by Pixee suggests false positive rates of 71-88% for typical scanning tools. Without triage, engineers spend time investigating alerts that turn out to be non-issues, and alert fatigue sets in.

Every finding should come with context: exploitability, reachability, business impact, and a recommended action. AI-assisted triage makes this scalable. For example, DerScanner’s DerTriage filters up to 95% of false positives before findings reach a developer.
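As a rough illustration of how that context can drive ordering, here is a minimal sketch of a triage score. The `Finding` fields, the scales, the 0.1 discount for unreachable code, and the thresholds are all assumptions made for this example; they are not how DerTriage or any specific product scores findings.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    id: str
    exploitability: float  # assumed scale: 0.0 (theoretical) to 1.0 (trivially exploitable)
    reachable: bool        # is the vulnerable code reachable from an entry point?
    business_impact: int   # assumed scale: 1 (low) to 5 (critical)


def priority(finding: Finding) -> float:
    """Hypothetical score: findings in unreachable code are heavily discounted."""
    reach_factor = 1.0 if finding.reachable else 0.1
    return finding.exploitability * reach_factor * finding.business_impact


def recommended_action(score: float) -> str:
    """Map a score to an action; the thresholds are illustrative only."""
    if score >= 3.0:
        return "fix before release"
    if score >= 1.0:
        return "schedule in next sprint"
    return "accept or suppress with justification"


def triage(findings):
    """Return findings ordered from highest to lowest priority."""
    return sorted(findings, key=priority, reverse=True)
```

The point of the sketch is the shape of the decision, not the numbers: a reachable, easily exploited flaw in a critical service should surface first, and each finding should leave triage with an action attached.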

Developers get remediation guidance

Handing a developer a PDF of scan results and saying “please fix these” is how security backlogs grow to 100,000 items. A program closes the loop by delivering remediation suggestions in the same context where the vulnerability lives — the IDE, the pull request, the pipeline output — with concrete code patches that match the project’s language and framework.

Ideally, the tooling generates a context-aware fix alongside each finding, so the developer never has to leave the coding session.

Compliance reports are generated continuously

Under the EU Cyber Resilience Act, manufacturers of products with digital elements must report actively exploited vulnerabilities within 24 hours starting September 2026 — including products already on the market. That obligation is impossible to meet without continuous, archived compliance data.

A program treats compliance reports as build artifacts: generated automatically, mapped to relevant frameworks (CWE/SANS Top 25, OWASP Top 10, PCI DSS), archived per release.
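A minimal sketch of what "compliance report as a build artifact" could look like as a pipeline step follows. The finding format, the file naming scheme, and `SAMPLE_MAPPING` are assumptions for illustration; a real mapping would come from your scanner or from a maintained CWE-to-framework table, not from a hand-written dictionary.

```python
import json
from pathlib import Path

# Illustrative sample only: replace with a maintained CWE-to-framework mapping.
SAMPLE_MAPPING = {
    "CWE-89": {"OWASP Top 10": "A03:2021 Injection", "CWE Top 25": "CWE-89"},
    "CWE-79": {"OWASP Top 10": "A03:2021 Injection", "CWE Top 25": "CWE-79"},
}


def build_report(release, findings, mapping):
    """Group finding IDs by framework and control for a single release."""
    frameworks = {}
    unmapped = []
    for finding in findings:
        controls = mapping.get(finding["cwe"])
        if not controls:
            unmapped.append(finding["id"])
            continue
        for framework, control in controls.items():
            frameworks.setdefault(framework, {}).setdefault(control, []).append(finding["id"])
    return {
        "release": release,
        "finding_count": len(findings),
        "frameworks": frameworks,
        "unmapped": unmapped,
    }


def archive_report(report, out_dir):
    """Write the report next to the other release artifacts and return its path."""
    out_dir = Path(out_dir)
    out_dir.mkdir(parents=True, exist_ok=True)
    path = out_dir / f"compliance-{report['release']}.json"
    path.write_text(json.dumps(report, indent=2, sort_keys=True))
    return path
```

Run once per release build, this leaves one dated, versioned file per shipped version, which is the kind of evidence an auditor or regulator is likely to ask for.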

For more on how regulatory deadlines intersect with SDLC practices, see our compliance deadlines breakdown.

SBOMs are versioned per release

"Is our product affected by this new CVE?" The fast answer comes from an archived SBOM tied to the specific shipped version. The slow answer comes from reconstructing the build environment, which can take days.

DerScanner generates SBOMs in CycloneDX format as part of every SCA scan. The program-level practice is to tag them per release and store them alongside release artifacts as a pipeline step.
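To show why archived SBOMs make the CVE question fast, here is a small sketch that searches per-release CycloneDX JSON documents for a vulnerable component. It relies only on the `components`, `name`, and `version` fields of the CycloneDX JSON format. The function names, the in-memory `sboms` dictionary keyed by release tag, and the exact-version matching are simplifying assumptions; real advisories usually specify version ranges.

```python
def iter_components(components):
    """Yield every component, including nested ones, from a CycloneDX component list."""
    for component in components or []:
        yield component
        yield from iter_components(component.get("components"))


def affected_releases(sboms, package, vulnerable_versions):
    """Return release tags whose SBOM contains `package` at a vulnerable version.

    sboms: dict mapping a release tag to a parsed CycloneDX JSON document.
    vulnerable_versions: set of exact version strings (a simplification).
    """
    hits = []
    for release, bom in sboms.items():
        for component in iter_components(bom.get("components")):
            if component.get("name") == package and component.get("version") in vulnerable_versions:
                hits.append(release)
                break  # one match is enough to flag the release
    return sorted(hits)
```

With one SBOM archived per release, answering the question becomes a lookup over stored files rather than a rebuild of old environments.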

There is a feedback loop between security and engineering

When the same class of vulnerability, such as SQL injection, keeps appearing in new code, the team may be fixing findings without learning from them. Findings should feed back into secure coding training, code review checklists, framework choices, and architectural decisions.
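One simple way to spot that pattern is to count how many releases each vulnerability class shows up in. The sketch below assumes new findings are already grouped by release and labeled with a CWE ID; the function name and the threshold of three releases are arbitrary choices for illustration.

```python
from collections import Counter


def recurring_classes(new_findings_by_release, min_releases=3):
    """Return CWE IDs that were newly introduced in at least `min_releases` releases.

    new_findings_by_release: dict mapping a release tag to a list of CWE IDs
    for findings first seen in that release.
    """
    releases_seen = Counter()
    for cwes in new_findings_by_release.values():
        for cwe in set(cwes):  # count each class once per release
            releases_seen[cwe] += 1
    return sorted(cwe for cwe, count in releases_seen.items() if count >= min_releases)
```

A class that keeps reappearing is a candidate for a training session, a review checklist item, or a framework-level guard rather than another round of one-off fixes.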

The Progression

The OWASP Software Assurance Maturity Model (SAMM), which OWASP recommends in its Top 10 2025 guidance, describes program maturity as a gradient rather than a yes-or-no state. In practical terms, the progression looks something like this:

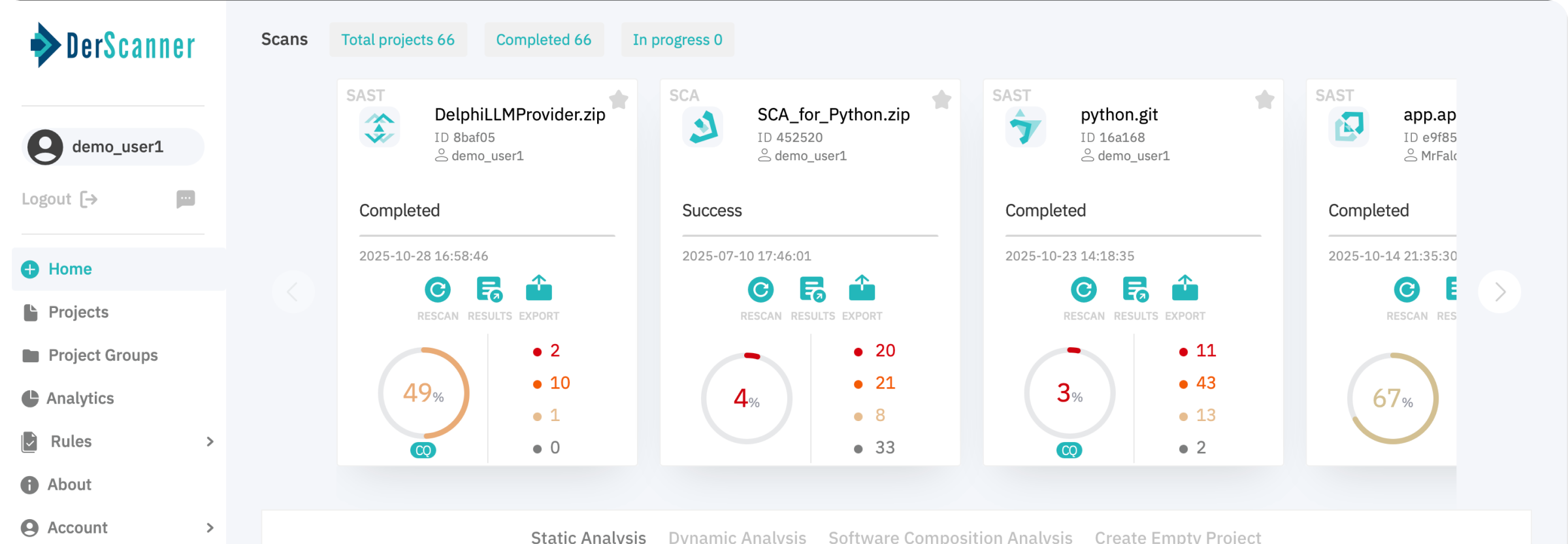

- Stage 1: Scanning exists. There is a tool and someone runs it. Results live in a dashboard that few people look at.

- Stage 2: Scanning is automated. Scans run in CI/CD, findings are centralized, and there is a process for assigning ownership.

- Stage 3: Findings are triaged and prioritized, and false positives are filtered out. Developers receive remediation guidance. Compliance reports are generated automatically. The team has visibility into backlog trends.

- Stage 4: The program prevents as much as it detects. Metrics include prevention rates, time-to-fix, and recurring-vulnerability rates. Security and engineering operate as a single loop.
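Two of the metrics named in the stages above, time-to-fix and backlog trend, are straightforward to compute once findings carry open and close dates. The following is a minimal sketch; the function names and input shapes are assumptions, and a real program would pull these values from its issue tracker or scanner.

```python
from datetime import date
from statistics import median


def median_time_to_fix(fixed):
    """Median days between opening and closing a finding.

    fixed: list of (opened, closed) date pairs for remediated findings.
    """
    return median((closed - opened).days for opened, closed in fixed)


def backlog_trend(opened_per_month, fixed_per_month, start=0):
    """Running backlog size after each month, never dropping below zero."""
    backlog = start
    trend = []
    for opened, fixed in zip(opened_per_month, fixed_per_month):
        backlog = max(0, backlog + opened - fixed)
        trend.append(backlog)
    return trend
```

Using the figures cited earlier (roughly 17 new vulnerabilities and 6 fixes per application per month), `backlog_trend([17, 17, 17], [6, 6, 6])` grows by 11 each month, which is how backlogs reach six figures across a large portfolio.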

Most mid-size organizations sit between Stages 1 and 2 and mistake it for Stage 3. The gap matters because regulators, customers, and auditors are increasingly asking questions that only a Stage 3+ program can answer.

The difference between scanning and having a program has nothing to do with buying more tools. It comes down to tracking what happens after the scan runs: whether findings turn into decisions, whether decisions turn into fixes, and whether fixes turn into fewer findings next time.
